It’s tempting to treat AI hallucination as a technology flaw that better models will eventually eliminate. The more accurate explanation: AI produces unreliable outputs when it’s asked to reason about things it has no real information on.

AI Hallucination Is a Context Problem

In general-purpose tasks, hallucination rates for leading models hover between 10% and 20%. For complex domain-specific reasoning, the kind required to diagnose a multi-hop network failure, that number climbs further when the AI lacks grounding in the actual environment.

Network operations is a high-stakes domain for exactly this failure mode. A confident but wrong diagnosis sends engineers to the wrong devices. A plausible-sounding root cause that doesn’t match your topology (or solve the actual problem) wastes hours and erodes trust in the tool fast enough that the whole AI initiative stalls.

Grounding the AI in organization-specific operational context reduces hallucination rates by up to 71% compared to ungrounded approaches. The model doesn’t change. What changes is what the model has to work with.

The Four Network Questions AI Needs Answered Before It Can Help

Reliable AI-driven diagnosis requires answering four questions about your specific network before any reasoning begins. Each one maps to a distinct data layer and a distinct technology that provides it.

“What’s in my network now?”

The AI needs two things before it can investigate anything: a map of your network and a current read on how it’s behaving.

The map comes from the digital twin — every device, every interface, every path, continuously updated. When a problem comes in, the AI already knows which devices sit on the affected path. A problem statement like “voice quality issues between these two endpoints” resolves to a specific set of hops and interfaces before the AI runs a single command. Without this, the AI guesses at scope and hallucination follows.
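As a rough illustration of how a problem statement can resolve to a set of hops, the sketch below runs a breadth-first search over a small adjacency map. The `TOPOLOGY` data, the device and interface names, and the `scope_path` function are all hypothetical stand-ins for whatever a real digital twin exposes; this is not any product's API.

```python
from collections import deque

# Hypothetical adjacency map standing in for a digital twin's topology model.
# Each device maps a neighbor name to the local interface facing that neighbor.
TOPOLOGY = {
    "phone-a": {"access-sw1": "eth0"},
    "access-sw1": {"phone-a": "Gi1/0/5", "core-rtr1": "Te1/1/1"},
    "core-rtr1": {"access-sw1": "Te0/0/1", "core-rtr2": "Te0/0/2"},
    "core-rtr2": {"core-rtr1": "Te0/0/1", "access-sw2": "Te0/0/2",
                  "branch-rtr9": "Te0/0/3"},
    "access-sw2": {"core-rtr2": "Te1/1/1", "phone-b": "Gi1/0/7"},
    "phone-b": {"access-sw2": "eth0"},
    "branch-rtr9": {"core-rtr2": "Gi0/0"},  # reachable, but not on the voice path
}


def scope_path(topology, src, dst):
    """Return [(device, egress_interface), ...] for a shortest path, or []."""
    parents = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            break
        for neighbor in topology.get(node, {}):
            if neighbor not in parents:
                parents[neighbor] = node
                queue.append(neighbor)
    if dst not in parents:
        return []  # no known path: better to say so than to guess at scope

    # Walk back from the destination to rebuild the ordered device list.
    devices = []
    node = dst
    while node is not None:
        devices.append(node)
        node = parents[node]
    devices.reverse()

    # Pair each device with the interface it uses to reach the next hop.
    hops = []
    for i, device in enumerate(devices):
        nxt = devices[i + 1] if i + 1 < len(devices) else None
        hops.append((device, topology[device][nxt] if nxt else None))
    return hops


if __name__ == "__main__":
    for device, egress in scope_path(TOPOLOGY, "phone-a", "phone-b"):
        print(device, egress)
```

The point of the sketch is the return value: an explicit, ordered list of devices and interfaces that later steps can query, with off-path devices such as `branch-rtr9` excluded before any command runs.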

Live state fills in what the map can’t tell you. Most monitoring tools poll on a cycle. By the time a ticket is opened, that data is stale. Transient conditions may have already cleared and left no trace. Automation fixes this by retrieving targeted CLI output and API responses from the live network on demand, at the moment the diagnosis runs, from the devices the topology layer already identified as relevant.
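The difference between polled and on-demand data can be sketched in a few lines. In the example below, `run_command` is an assumed callable that would wrap whatever CLI or API transport an environment actually uses; here it is replaced by a stub so the code runs without a network. The `Reading` type, the 30-second freshness window, and the device names are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Reading:
    device: str
    command: str
    output: str
    collected_at: datetime


def collect_live_state(devices, commands, run_command, now=None):
    """Run each command on each in-scope device at the moment of diagnosis."""
    now = now or datetime.now(timezone.utc)
    return [
        Reading(device, command, run_command(device, command), now)
        for device in devices
        for command in commands
    ]


def is_stale(reading, incident_opened_at, max_age=timedelta(seconds=30)):
    """True if the reading predates the incident by more than max_age."""
    return incident_opened_at - reading.collected_at > max_age


def fake_run_command(device, command):
    # Stub transport (assumption): a real one would open a session to the device.
    return f"{device}# {command}\n(output elided)"


opened = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)

# A reading left over from a polling cycle five minutes before the ticket.
polled = Reading("core-rtr1", "show interfaces", "(cached)", opened - timedelta(minutes=5))

# Readings pulled on demand, only from devices already identified as in scope.
live = collect_live_state(
    ["core-rtr1", "core-rtr2"],
    ["show policy-map interface"],
    fake_run_command,
    now=opened,
)
```

Passing the device list in as an argument reflects the ordering described above: the topology layer decides where to look, and the retrieval step only touches those devices.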

Together, these two layers (topology and live state) answer the first question any diagnosis requires: what is actually happening, on which devices, right now.

“What are the goals of my network?”

This is the layer most teams are missing, and the one that determines whether AI can evaluate what it finds.

Without a defined standard for what the network should look like, AI can describe a configuration but can’t assess it. It has no way to distinguish a deviation that’s an acceptable exception from one that will cause an outage under specific conditions. It reports what it sees. It can’t tell you if what it sees is wrong.

Intent is the encoded desired state and configuration of all of your network’s features: golden configurations, QoS policies, BGP prefix thresholds, application path requirements, compliance standards. It’s the operational “tribal” knowledge your best network architect carries in their head, made available to the machine as executable automation.

With intent to rely on, AI shifts from descriptive to diagnostic. A QoS configuration difference between two devices becomes a policy violation against the encoded golden state, rather than an observation without context.
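A minimal sketch of that shift, assuming intent is stored as a plain dictionary of expected QoS settings: the function below compares a device's observed settings against the golden values and labels each difference as either a violation or an approved exception. The `GOLDEN_QOS` values, the device name, and the `approved_exceptions` mechanism are hypothetical and chosen only to make the comparison concrete.

```python
# Hypothetical golden QoS standard: traffic class -> expected settings.
GOLDEN_QOS = {
    "voice": {"dscp": "ef", "priority_percent": 30},
    "video": {"dscp": "af41", "bandwidth_percent": 20},
}


def evaluate_against_intent(device, actual, golden, approved_exceptions=frozenset()):
    """Return one finding per setting that differs from the golden standard."""
    findings = []
    for traffic_class, expected in golden.items():
        observed = actual.get(traffic_class)
        for setting, want in expected.items():
            got = None if observed is None else observed.get(setting)
            if got == want:
                continue
            key = (device, traffic_class, setting)
            findings.append({
                "device": device,
                "class": traffic_class,
                "setting": setting,
                "expected": want,
                "actual": got,
                # A documented exception is reported, but not as a violation.
                "status": "approved_exception" if key in approved_exceptions else "violation",
            })
    return findings


# Observed configuration on a backup-path device (illustrative values).
backup_actual = {
    "voice": {"dscp": "ef", "priority_percent": 10},
    "video": {"dscp": "af41", "bandwidth_percent": 20},
}

findings = evaluate_against_intent("backup-rtr1", backup_actual, GOLDEN_QOS)
```

Without `GOLDEN_QOS`, the same code could only print `backup_actual`; with it, the lower voice priority becomes a finding with an expected value attached.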

“What’s wrong?”

Only after the preceding questions are answered can AI do what it’s actually built for: reason across the data, identify the delta between actual and intended state, and work toward a conclusion.

An agentic system doesn’t answer once and stop. It plans a sequence of steps, executes the relevant automations, evaluates what comes back, and iterates. In one production deployment, this process traced voice degradation to a QoS misconfiguration on a backup path, surfacing a root cause in under four minutes that passive monitoring had not caught at all. The issue only appeared during failover. The AI found it because it knew the intended QoS policy, retrieved the actual configuration, and identified where the two diverged.
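The plan, execute, evaluate, iterate cycle can be sketched as a simple loop. This is a deliberately reduced illustration, not a description of how any particular agent is built: the "plan" is just an ordered list of in-scope devices, and `fetch_config` and `check_intent` are assumed callables standing in for live-state retrieval and intent comparison. All device names and values are made up.

```python
def diagnose(path_devices, fetch_config, check_intent, max_steps=10):
    """Inspect in-scope devices one by one until a violation or a handoff."""
    pending = list(path_devices)  # plan: devices still to inspect
    trace = []                    # every step is recorded for transparency
    steps = 0
    while pending and steps < max_steps:
        device = pending.pop(0)
        steps += 1
        actual = fetch_config(device)              # execute
        violations = check_intent(device, actual)  # evaluate
        trace.append((device, violations))
        if violations:
            return {"status": "root_cause_candidate", "device": device,
                    "violations": violations, "trace": trace}
    # Nothing conclusive within budget: hand off with the evidence gathered.
    return {"status": "handoff_to_engineer", "device": None,
            "violations": [], "trace": trace}


# Stub data (assumptions): intended voice priority and observed per-device values.
INTENDED_VOICE_PRIORITY = 30
OBSERVED = {"core-rtr1": 30, "core-rtr2": 30, "backup-rtr1": 10}


def fetch_config(device):
    return {"voice_priority_percent": OBSERVED[device]}


def check_intent(device, actual):
    got = actual["voice_priority_percent"]
    if got == INTENDED_VOICE_PRIORITY:
        return []
    return [f"{device}: voice priority {got}%, intended {INTENDED_VOICE_PRIORITY}%"]


result = diagnose(["core-rtr1", "core-rtr2", "backup-rtr1"], fetch_config, check_intent)
```

Two design choices matter more than the loop itself: the step budget (`max_steps`) guarantees the process ends in either a candidate root cause or an explicit handoff, and the `trace` keeps each conclusion tied to the data that produced it.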

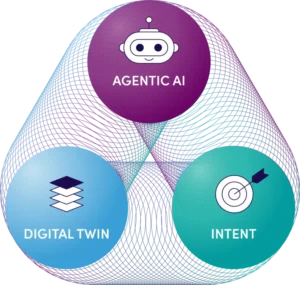

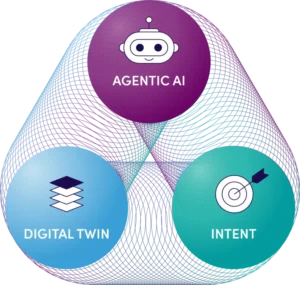

The Three-Layer Foundation for Context-Aware AI

These four questions draw on three technologies working together. Each one solves a different part of the context problem.

| Layer | What It Provides | What AI Does Without It |
| --- | --- | --- |
| Digital Twin | Live topology and inventory | Guess at scope; hallucinate path and device context |
| Intent-Based Automation | Desired state and golden standards | Describe configurations; can’t evaluate them |
| Agentic AI | Goal-driven reasoning across both layers | N/A |

Digital Twin

A digital twin is a continuously updated model of your network — every device, every interface, every path, every dependency across on-premises, cloud, and hybrid environments. It auto-discovers the network and keeps that model current so that when a problem comes in, the AI already knows your topology without being told.

Its job in the context stack is straightforward: tell the AI what exists and how everything connects. That’s the scope layer. Without it, the AI has no way to know which devices are relevant to a given problem and has to either guess or apply generic heuristics.

Intent-Based Automation

Intent is where your network’s operational standards live. It encodes what the network is supposed to look like — golden configurations, QoS policies, BGP thresholds, application path requirements — and makes those standards available as executable automation that can retrieve and compare live data against them on demand.

This is what gives AI a benchmark. A QoS policy on a backup path is just data. A QoS policy on a backup path that deviates from the encoded golden standard is a finding. Without intent, the AI can observe your network but cannot evaluate it. With intent, it can tell the difference between a known exception and a configuration that will cause problems under specific conditions.

Agentic AI

The AI is the reasoning and acting layer. Given a problem statement, it plans which steps to take, uses the digital twin to scope the investigation, executes automations to retrieve live state, compares results against intent, and transparently iterates until it reaches a conclusion. It doesn’t answer once and stop. It keeps going until the goal is met or until it surfaces a clear handoff point for an engineer.

The practical distinction from a standard AI query: the reasoning is grounded. Every conclusion traces back to actual device data from your network, evaluated against a standard you defined. That’s what reduces hallucination: not a better model, but a model that has specific, verifiable information to reason against.

What Shifts When All Three Technologies Are in Place

The most immediate effect is on escalation patterns. When AI has real topology, live state, and encoded intent, Tier-1 and Tier-2 engineers can resolve problems that previously required Tier-3 involvement. The AI surfaces the same contextual picture a senior engineer would arrive at after 45 minutes of manual investigation, and it does so before the engineer has even looked at the ticket.

Less obvious but just as significant: the knowledge concentration problem starts to close. In most network teams, operational expertise is distributed unevenly. Three people understand the network deeply; everyone else escalates to them. Every intent standard encoded into the platform is institutional knowledge that the machine can use, the next engineer can rely on, and the organization retains regardless of turnover.

The reliability problem — the one that makes engineers distrust AI after a few bad experiences — also changes. Hallucination drops sharply when the AI is grounded in organization-specific network context. Engineers start trusting the output because the output starts matching what they know about the network.

Where to Start

Before evaluating any AI capability in network operations, assess where your context layers stand.

Digital Twin Coverage

- Is your topology model updated continuously, or does it drift between audit cycles?

- Does it cover cloud and hybrid infrastructure, or just on-premises?

- When an incident occurs, can you immediately scope which devices are on the affected path?

Live State Automation

- Can you retrieve current device state on demand during an active incident?

- Are you dependent on polling-interval data, or can automation pull targeted CLI output at the moment of diagnosis?

Encoded Intent

- Have you defined what “correct” looks like for your most critical application paths?

- Do you have golden configuration standards that automation can compare against live ones?

- Are your most common failure modes encoded anywhere a machine can use them?

None of these layers needs to be fully built before you get value. Each one you add improves the accuracy of everything above it. A single encoded intent standard that covers your highest-risk change type is more useful than a hundred open-ended AI queries against an ungrounded model.
